ChatGPT fails a modified Monty Hall problem

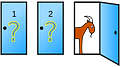

To evaluate the extent to which LLMs have developed the ability to actually reason through a problem rather than doing fuzzy pattern matching on existing answers, I decided to test some leading chat models with the following modified version of the Monty Hall problem:

Suppose you're on a gameshow run by Ebenezer Scrooge, who hates giving out expensive prizes. You're given the choice of three doors to pick your prize. Behind one door (but you don't know which) is a car. Behind each of the other doors there is a goat. You pick a door, (let's say No. 1). Instead of immediately opening the door, Scrooge, who knows what's behind the doors, opens another door (let's say No. 3), which has a goat. He then says to you, "Do you want to pick door No. 2 instead?" Is it to your advantage to switch your choice?

Note that the original Monty Hall problem is highly ambiguous as to the host’s behavior, and depending on what the host actually does, switching may or may not be optimal. This version of the problem is designed to narrow down the host’s behavior, but in a different direction from the interpretation assumed by the classic Monty Hall solution.

Given how Scrooge is described in the modified setup, he is likely to do everything he can to decrease your chances of winning the car. As a result, he would likely follow the “Monty from Hell” behavior described in Wikipedia’s table of potential host behaviors, in which the host offers the switch only when your initial choice is the winning door. If the door you picked had a goat behind it, he would not open another door to reveal a goat and help you potentially win the car. Rather, the fact that Scrooge is offering you the choice to switch indicates that you likely picked correctly the first time, and Scrooge is trying to tempt you to switch.
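To make the difference concrete, here is a minimal simulation sketch. It is not part of the original experiment: the function name `switch_win_rate` and the `"classic"`/`"hell"` labels are my own, and the "hell" host is modeled under the assumption that he only opens a goat door and offers the switch when your first pick is the car.

```python
import random

def switch_win_rate(host, trials=100_000, seed=0):
    """Estimate P(switching wins | host opens a goat door and offers a switch)."""
    rng = random.Random(seed)
    offers = 0
    switch_wins = 0
    for _ in range(trials):
        car = rng.randrange(3)
        pick = rng.randrange(3)
        if host == "hell" and pick != car:
            # Assumed "Monty from Hell" rule: no offer when the first pick is a goat.
            continue
        # The host opens a goat door that is not the contestant's pick.
        opened = rng.choice([d for d in range(3) if d != pick and d != car])
        # The only door left to switch to.
        other = next(d for d in range(3) if d != pick and d != opened)
        offers += 1
        switch_wins += (other == car)
    return switch_wins / offers

print("classic host:   ", round(switch_win_rate("classic"), 3))
print("Monty from Hell:", round(switch_win_rate("hell"), 3))
```

Under the classic host, switching wins about two thirds of the time. Under the assumed "hell" host, every offer is made while you are already holding the car, so switching never wins.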

Human Responses

Since LLMs are trained on human data and are arguably trying to replicate expert human responses, I wanted a human baseline to compare against, so I sent the modified problem to some tech/math-oriented friends and acquaintances.

Some respondents thought that the problem was just the classic Monty Hall problem, with some interpreting my modification as clarifying that Scrooge wouldn’t reveal a door with a car, as he might have in, for example, the “Monty Fall” problem (named after the idea of the host slipping on a banana peel and accidentally opening a door, which just happens to have a goat behind it).
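For reference, the Monty Fall variant can be worked out exactly by enumeration. The sketch below is my own illustration, assuming the host opens one of the two unpicked doors uniformly at random and that we only consider the cases where a goat happens to be revealed; the function name is hypothetical.

```python
from fractions import Fraction

def monty_fall_switch_probability():
    """Exact P(switching wins | the accidentally opened door shows a goat)."""
    pick = 0  # by symmetry, fix the contestant's pick to door 0
    goat_shown = Fraction(0)
    switch_wins = Fraction(0)
    for car in range(3):
        for opened in (1, 2):  # the host opens one of the other doors at random
            weight = Fraction(1, 3) * Fraction(1, 2)
            if opened == car:
                continue  # the car was revealed; excluded by the conditioning
            goat_shown += weight
            remaining = 3 - pick - opened  # door indices 0, 1, 2 sum to 3
            if remaining == car:
                switch_wins += weight
    return switch_wins / goat_shown

print(monty_fall_switch_probability())  # 1/2
```

With an accidental reveal, switching and staying are equally good, which is why the host's knowledge and intent matter so much to the answer.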

Others, though, interpreted the problem as I intended. For example, here’s the response from Christine Eriksen, who provided a clear solution to the problem:

Hmm it sounds like the traditional Monty Hall problem... but I don't know if Scrooge always opens an alternative door. He may be doing this simply because he knows I've selected the expensive door. Not trusting his motivations, I will keep my currently selected door. Now, if I know he ALWAYS does a Monty Hall style door reveal, I will indeed switch my selection
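This reasoning can be written as a short Bayes calculation. The notation is mine, not from any of the responses: assume Scrooge always opens a goat door and offers the switch when your first pick is the car, and does so with probability $q$ when your first pick is a goat.

$$
P(\text{car behind Door 1} \mid \text{offer})
= \frac{\tfrac{1}{3}\cdot 1}{\tfrac{1}{3}\cdot 1 + \tfrac{2}{3}\cdot q}
= \frac{1}{1 + 2q},
\qquad
P(\text{switching wins} \mid \text{offer}) = \frac{2q}{1 + 2q}.
$$

Under this assumption, switching beats staying only when $q > \tfrac{1}{2}$. Setting $q = 1$ recovers the classic answer of $\tfrac{2}{3}$ for switching, while $q = 0$ is the “Monty from Hell” host, against whom switching never wins.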

Initial Results

Because this is a problem that is potentially heavily dependent on proper fine-tuning, I focused on evaluating LLM services (which are likely more carefully fine-tuned) rather than open source models. So did the AIs spot the difference? Let’s start with ChatGPT-4:

Yes, it is to your advantage to switch your choice.

This scenario is a famous probability puzzle known as the Monty Hall problem. When you initially choose a door, you have a 1/3 chance of picking the car and a 2/3 chance of picking a goat. The key part of the puzzle is when Scrooge opens a door (that you didn't pick) to reveal a goat. This action changes the probabilities.

At the start, the probability of the car being behind each door is:

Door 1 (your initial choice): 1/3

Door 2: 1/3

Door 3: 1/3

After Scrooge reveals a goat behind Door 3, the probabilities change. Door 3 now has a 0% chance of having the car. However, the probability that the car is behind Door 2 isn't just 1/3; it increases to 2/3. This is because there was originally a 2/3 chance that you picked a goat initially, and if you did, the car must be behind one of the other two doors. Since Scrooge has shown that one of these doors has a goat, the entire 2/3 probability shifts to the remaining closed door.

So, by switching your choice to Door 2, you actually double your chances of winning the car, from 1/3 to 2/3.

It didn’t even notice there were differences in the problem statement, and simply regurgitated an explanation of the classic Monty Hall problem’s solution. That’s a fail in my book.

Now we can try Claude:

Okay, let's think through this step-by-step:

There are 3 doors (1, 2, 3)

Behind one door is a car, behind the other two are goats.

I pick Door 1 initially.

Scrooge, knowing where the car is, opens Door 3 which has a goat.

He then asks if I want to switch to Door 2.

The key is that Scrooge will always open a door with a goat behind it, never the door with the car.

So if I originally picked the door with the car (1/3 chance), he would open one of the other two doors with a goat. In this case I should NOT switch, because I already have the car.

However, if I originally picked a goat (2/3 chance), he would open the other goat door, leaving the car door still closed. In this case I SHOULD switch to the other unopened door, which has the car.

Since there is a 2/3 chance I initially picked a goat, switching doors doubles my chances of winning the car.

So yes, it is to my advantage to switch to Door 2 in this scenario. The counterintuitive part is that switching doors increases my chances, even though my initial pick had a 1/3 chance of being right.

Interestingly, Claude answers the problem without any direct reference to the Monty Hall problem, but essentially provides an answer to the original problem. It doesn’t really reason through the implications of Scrooge’s miserliness, but to its credit, it does explicitly state an assumption regarding the host’s behavior, ruling out the possibility of the “Monty Fall” problem.

Finally, let’s see Pi’s response:

Ahh, the classic Monty Hall problem, but with a Scrooge-y twist! And the answer is yes, it is to your advantage to switch your choice.

At the start, you had a 1/3 chance of picking the car, and a 2/3 chance of picking a goat, because one of the three doors had the car and the other two had goats. When Scrooge opens one of the other doors to reveal a goat, he's not giving you any new information about the door you originally picked, but he is giving you new information about the remaining closed door.

Because Scrooge knows where the car is and he's not going to reveal it, the fact that he's opened a door with a goat means that the remaining closed door is more likely to have the car. So, switching to the other door gives you a 2/3 chance of getting the car, whereas sticking with your original choice only gives you a 1/3 chance.

It's a bit counterintuitive, but that's the beauty of probability!

To its credit, Pi notes that it’s the Monty Hall problem, but with a twist. There’s also a possibility it’s referencing Scrooge’s characteristics in explaining why the problem isn’t a Monty Fall problem (“Scrooge knows where the car is and he's not going to reveal it”). This is the best response of the three.

Follow-up Results

Let’s see if we can get the LLMs to acknowledge the issue by providing a big hint:

“What if Scrooge is trying to trick us into switching?”

First up is ChatGPT (conversation linked in full):

Even if Scrooge might seem like he's trying to trick you into switching, the statistical advantage of switching your choice in the Monty Hall problem remains true regardless of his intentions.

Here's why:

Initial Probabilities: When you first choose a door, you have a 1/3 chance of picking the car and a 2/3 chance of picking a goat.

Scrooge's Action: When Scrooge opens a door to reveal a goat, he does not change these initial probabilities. He will always be able to open a door with a goat behind it, regardless of your initial choice, because there are two goats.

Reassessment of Probabilities: After one of the doors with a goat is revealed, the probability that the car is behind the door you initially chose remains 1/3. However, the probability that the car is behind the other unopened door now becomes 2/3. This is because there was a 2/3 chance you picked a goat initially, and if you did, the car must be behind one of the other two doors.

Therefore, even if Scrooge seems like he is tricking you by offering the chance to switch, the statistical advantage of switching is a result of the initial conditions of the problem and how probabilities are reassessed after one door is opened. The benefit of switching does not depend on Scrooge's intentions or strategies; it is purely based on probability.

ChatGPT stubbornly insists on its original answer and refuses to take the hint. To me, that’s a fail.

Next up is Claude:

That's a good point to consider. If Scrooge is intentionally trying to trick me into switching to the wrong door, then my analysis changes.

In that case, we can't rely on the assumption that Scrooge will always open a door with a goat behind it. He could open the door with the car, pretend there's a goat, and try to get me to switch to a goat door.

If Scrooge is dishonest in this way, there is no longer a benefit to switching doors. My initial 1/3 chance of picking the car door is the same whether I switch or not.

So in summary:

- If Scrooge reliably reveals a goat door, I should switch.

- If Scrooge might lie about what's behind the opened door, I should stick with my original pick.

Since we don't know Scrooge's intentions, there is no clearly optimal strategy. My decision on whether or not to switch should depend on how much I trust that Scrooge is playing fair.

This is a lot better. Claude acknowledges that it was making assumptions about Scrooge’s behavior that can’t be relied upon. It acknowledges that without knowing Scrooge’s intentions, there is no optimal strategy, and that the decision hinges on whether Scrooge is playing fair. On the other hand, it refrains from drawing any conclusions about Scrooge’s likely behavior. All in all, an acceptable but not optimal response.

Last up is Pi (conversation linked in full):

Ooh, good question! If Scrooge is trying to play tricks on you, the math changes a bit. In the original problem, we assume that Scrooge is being honest and that he's always going to open a door with a goat and give you the chance to switch. But if he's not playing fair, all bets are off.

If Scrooge sometimes switches up his strategy and opens the door with the car, or if he's doing some kind of tricky mind game where he only offers you the chance to switch when it's not in your favor, then it's not clear that switching is always the best choice.

In a situation like this, you'd want to try to gather more information about Scrooge's strategy or motivations. Do you have any reason to believe he's not being honest? Does he have a history of playing tricks on contestants? The more you know about the game and the person running it, the better equipped you'll be to make a good decision.

This is rather similar to Claude’s response. Pi acknowledges the assumptions it made and also states that the results will depend on our understanding of Scrooge’s behavior, but it doesn’t make any inferences about Scrooge’s likely behavior despite the information given. On the other hand, it does seem to imply that my hint makes the scenario different from “the original problem” that I posed to it, which is a bit of a cop-out.

Interpretation

When interviewing software engineering candidates, it’s common practice to pose a well-known problem but with a special twist. Doing so ensures that the interviewee thinks for themselves rather than regurgitating a memorized solution. It’s also common to help the interviewee with clarifying hints, to see how well they can incorporate advice when solving a problem.

None of the AIs performed particularly well compared to the benchmark set by the best human responses. It’s hard to credit the LLMs with genuine understanding, as opposed to sophisticated pattern matching and synthesis of existing responses.

ChatGPT’s response was exceptionally bad, though, resolutely insisting that the problem posed is identical to the classic interpretation of the Monty Hall problem. I wonder if this is due to fine-tuning for safety. If you train your AI to avoid getting tricked into saying things it’s supposed to avoid, you’re probably teaching it to be increasingly stubborn and to refuse to be talked out of its chosen position.

I’ll be looking forward to seeing how future LLMs improve their performance on a question like this. Sadly, by publishing this post, I’m likely contaminating the training data for future LLMs, and will need to come up with a different unpublished question to test them with.